📚 01. Dataset Overview

The articles were collected from several major Vietnamese online newspapers (VnExpress, Tuổi Trẻ, Thanh Niên). To keep the dataset balanced, we used only a subset of the collected articles.

🔍 02. Exploratory Data Analysis (EDA)

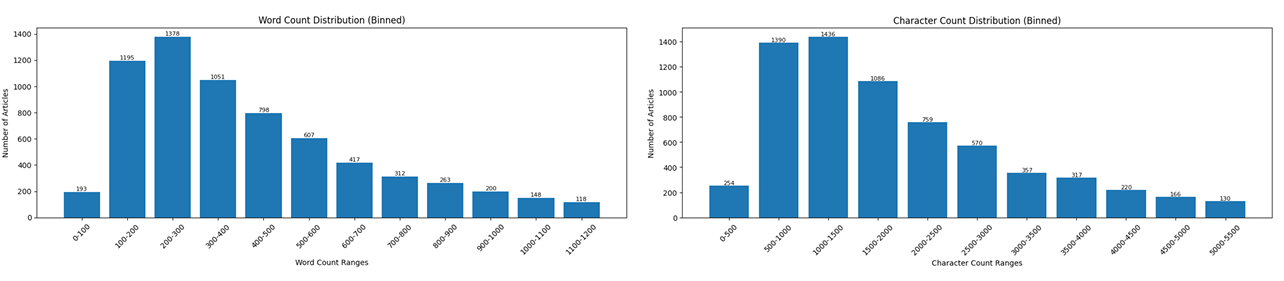

The distribution of word and character counts is right-skewed (long tail). The Đời sống (Lifestyle) class is the longest on average (588 words), while Vi tính (IT) is the shortest (372 words).
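Per-class length statistics of this kind can be computed with the standard library alone. The sketch below is illustrative only: the `articles` list and the `length_stats` helper are hypothetical stand-ins, not the project's data or code, and words are counted by whitespace splitting. A mean noticeably above the median is one simple sign of a right-skewed distribution.

```python
from collections import defaultdict
from statistics import mean, median

# Hypothetical toy corpus of (label, text) pairs; the real dataset is far larger.
articles = [
    ("Đời sống", "một hai ba bốn năm sáu bảy tám chín mười"),
    ("Đời sống", "một hai ba bốn"),
    ("Vi tính", "một hai ba"),
    ("Vi tính", "một hai"),
    ("Vi tính", "một hai ba bốn năm sáu bảy"),
]

def length_stats(pairs):
    """Return {label: (mean_words, median_words)} from whitespace word counts."""
    counts = defaultdict(list)
    for label, text in pairs:
        counts[label].append(len(text.split()))
    return {label: (mean(c), median(c)) for label, c in counts.items()}

stats = length_stats(articles)
for label, (avg, med) in stats.items():
    print(f"{label}: mean={avg:.1f} words, median={med} words")
```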

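Sample article (Vietnamese): "Quảng Đông sẽ giết khoảng 10.000 cầy hương vì SARS. Trung Quốc vừa đưa ra thông báo trên, ngay sau khi các nhà khoa học thuộc Đại học tổng hợp Hong Kong phát hiện có điểm tương đồng về gene giữa virus..."

English gloss: "Guangdong will cull about 10,000 civets because of SARS. China made the announcement right after scientists at the University of Hong Kong found genetic similarities between the virus..."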

⚙️ 03. Setup & Preprocessing Pipeline

- Word Segmentation: All texts are preprocessed using VnCoreNLP to correctly group Vietnamese compound words (e.g., converting "học sinh" to "học_sinh").

- Subword Tokenization: Apply Byte Pair Encoding (BPE) to tokenize text into subwords, generating integer sequences optimized for the model architectures.

- Padding/Truncation: Input sequences are truncated or padded to a strict maximum length of 128 tokens.

```python
# Import necessary libraries
from transformers import AutoTokenizer
from pyvi import ViTokenizer

# 1. Word Segmentation (Grouping compound words)
raw_text = "Tàu du lịch cao tốc Cần Thơ"
segmented_text = ViTokenizer.tokenize(raw_text)
# Output: 'Tàu du_lịch cao_tốc Cần_Thơ'

# 2. Load Pretrained PhoBERT Tokenizer
tokenizer = AutoTokenizer.from_pretrained("vinai/phobert-base")

# 3. Subword Tokenization, Padding & Truncation
encoded_input = tokenizer(
    segmented_text,
    padding='max_length',
    truncation=True,
    max_length=128,
    return_tensors='pt'
)
```
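To make the padding/truncation step concrete, here is a minimal standard-library sketch of what fixing every sequence to 128 tokens means. The `pad_or_truncate` helper is hypothetical, and `pad_id=1` is an assumption (the padding id used by RoBERTa-style vocabularies). Unlike a real tokenizer, this sketch cuts the tail blindly and does not preserve the closing special token.

```python
def pad_or_truncate(token_ids, max_length=128, pad_id=1):
    """Return (input_ids, attention_mask), both exactly max_length long."""
    ids = list(token_ids)[:max_length]   # truncate sequences that are too long
    mask = [1] * len(ids)                # 1 marks a real token
    n_pad = max_length - len(ids)        # 0 when the sequence was truncated
    return ids + [pad_id] * n_pad, mask + [0] * n_pad

# A short sequence is padded on the right; the mask marks padding with 0.
short_ids, short_mask = pad_or_truncate([0, 100, 200, 2], max_length=8)

# A long sequence is cut to the default limit of 128 tokens.
long_ids, long_mask = pad_or_truncate(range(300))
```

The attention mask is what lets the model ignore padded positions, so both lists always have the same length.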

🏗️ 04. Model Building Pipeline

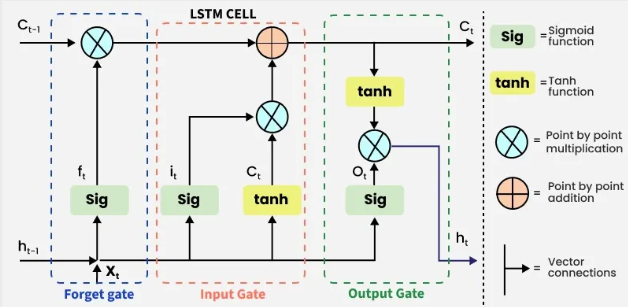

RNN: LSTM (Trained from scratch)

The model consists of an Embedding layer (dim=300), an LSTM layer (hidden size=256), Dropout (0.3), and a fully connected (FC) layer. It was trained with Adam (LR=1e-3) for 5 epochs with a batch size of 16.
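As a rough sanity check on model size, the layer dimensions above translate into parameter counts as sketched below. Several things here are assumptions rather than facts from this project: a single unidirectional LSTM layer, the PyTorch-style parameterization with two bias vectors per gate, 10 output classes, and a purely hypothetical vocabulary size of 20,000.

```python
def lstm_classifier_params(vocab_size, embed_dim=300, hidden=256, num_classes=10):
    """Count trainable parameters of an Embedding -> LSTM -> Dropout -> FC model."""
    embedding = vocab_size * embed_dim
    # 4 gates, each with input weights, recurrent weights and two bias vectors
    lstm = 4 * (embed_dim * hidden + hidden * hidden + 2 * hidden)
    # Dropout has no parameters; the FC layer maps the hidden state to class scores
    fc = hidden * num_classes + num_classes
    return {"embedding": embedding, "lstm": lstm, "fc": fc,
            "total": embedding + lstm + fc}

# vocab_size=20_000 is a hypothetical value chosen only for illustration
counts = lstm_classifier_params(vocab_size=20_000)
for name, n in counts.items():
    print(f"{name}: {n:,}")
```

Under these assumptions the embedding table dominates the parameter count, which is typical for models trained from scratch on word-level vocabularies.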

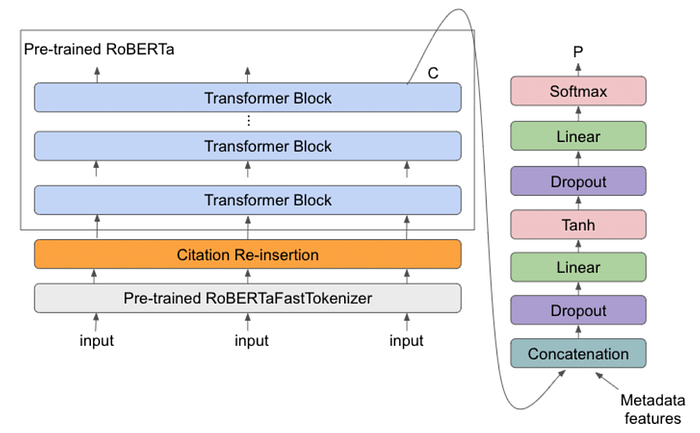

Transformer: PhoBERT (Fine-tuned)

PhoBERT is based on the RoBERTa architecture. To reduce training cost and limit overfitting, we froze the transformer encoder and trained only the classification head, using AdamW (LR=1e-3) for just 2 epochs with a batch size of 8.

```python
from transformers import AutoModelForSequenceClassification

# Load the pre-trained PhoBERT model with a 10-class classification head
model = AutoModelForSequenceClassification.from_pretrained(
    "vinai/phobert-base", num_labels=10
)

# Freeze all layers in the base RoBERTa encoder to save compute and prevent overfitting
for param in model.roberta.parameters():
    param.requires_grad = False

# Only the weights of the classification head (classifier layer) will be updated
for param in model.classifier.parameters():
    param.requires_grad = True
```
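To give a feel for how little is trained when the encoder is frozen, the arithmetic below estimates the size of the classification head. It assumes a hidden size of 768 and a head made of one dense layer (768 to 768) followed by an output projection (768 to 10), which is how the Hugging Face RoBERTa sequence-classification head is laid out. The encoder size of roughly 135M parameters is the commonly cited figure for PhoBERT-base and is used here only as an approximation.

```python
def head_params(hidden=768, num_labels=10):
    """Parameters of a dense(hidden -> hidden) + out_proj(hidden -> num_labels) head."""
    dense = hidden * hidden + hidden               # weights + biases
    out_proj = hidden * num_labels + num_labels    # weights + biases
    return dense + out_proj

trainable = head_params()

# Approximate, assumed encoder size; not measured from the checkpoint.
APPROX_ENCODER_PARAMS = 135_000_000
share = trainable / (trainable + APPROX_ENCODER_PARAMS)

print(f"trainable head parameters: {trainable:,}")
print(f"share of all parameters: {share:.2%}")
```

Under these assumptions, well under 1% of the weights receive gradient updates, which is consistent with training for only 2 epochs.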

🔄 Data Pipeline & Tensor Shapes

Below is the specialized data pipeline detailing the Tensor dimensions (Batch Size is denoted as B) and the semantic meaning of the data at each step.

Both models end with a logits tensor of shape (Batch, 10): one score per class for each article in the batch.
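The tensor shapes implied by the hyperparameters stated in this write-up can be traced with a small helper. This is a sketch derived from those numbers, not a reproduction of the original pipeline table. It assumes the LSTM summarizes a sequence by its final hidden state and that PhoBERT-base has a hidden size of 768 and classifies from the first-token vector. The `shape_trace` function is hypothetical.

```python
def shape_trace(model, seq_len=128, num_classes=10):
    """List (stage, shape) pairs; "B" stands for the batch size."""
    if model == "lstm":
        token_dim, feature_dim = 300, 256    # embedding dim, LSTM hidden size
    elif model == "phobert":
        token_dim, feature_dim = 768, 768    # assumed PhoBERT-base hidden size
    else:
        raise ValueError(f"unknown model: {model}")
    return [
        ("token ids", ("B", seq_len)),
        ("per-token vectors", ("B", seq_len, token_dim)),
        ("sequence summary", ("B", feature_dim)),
        ("class logits", ("B", num_classes)),
    ]

for name in ("lstm", "phobert"):
    print(name)
    for stage, shape in shape_trace(name):
        print(f"  {stage}: {shape}")
```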

🏆 05. Evaluation & Results

Note: Evaluated on the test split (approx. 20% of the active dataset).

Performance Comparison (Test Set)

| Model | Accuracy | F1-Score | Train Time (s) | Inference Time (s) |
|---|---|---|---|---|
| LSTM | 0.6429 | 0.6354 | ⚡ 410 | ⚡ 23.27 |
| PhoBERT (Frozen Encoder) | 🏆 0.8341 | 🏆 0.8274 | 5856 | 2956 |
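The trade-off in the table can be quantified with a few lines of arithmetic. The numbers below are copied from the table; the dictionary layout and variable names are illustrative.

```python
# Values taken from the performance comparison table above
results = {
    "LSTM":    {"accuracy": 0.6429, "f1": 0.6354, "train_s": 410,  "infer_s": 23.27},
    "PhoBERT": {"accuracy": 0.8341, "f1": 0.8274, "train_s": 5856, "infer_s": 2956},
}

lstm, phobert = results["LSTM"], results["PhoBERT"]

acc_gain = phobert["accuracy"] - lstm["accuracy"]     # absolute accuracy gain
f1_gain = phobert["f1"] - lstm["f1"]                  # absolute F1 gain
train_ratio = phobert["train_s"] / lstm["train_s"]    # relative training cost
infer_ratio = phobert["infer_s"] / lstm["infer_s"]    # relative inference cost

print(f"accuracy gain: {acc_gain * 100:.1f} points")
print(f"F1 gain: {f1_gain * 100:.1f} points")
print(f"training time ratio: {train_ratio:.1f}x")
print(f"inference time ratio: {infer_ratio:.0f}x")
```

PhoBERT gains about 19 points in both accuracy and F1, at roughly 14 times the training time and about 127 times the inference time of the LSTM.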

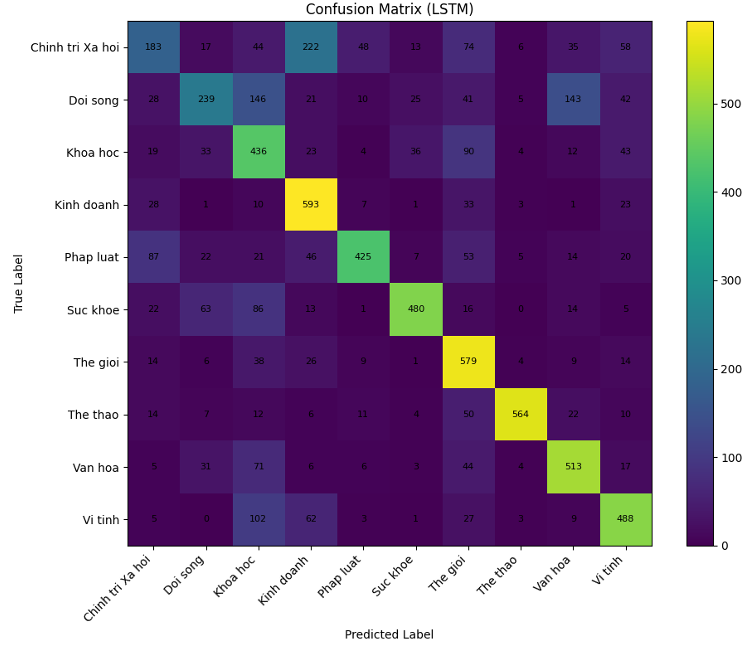

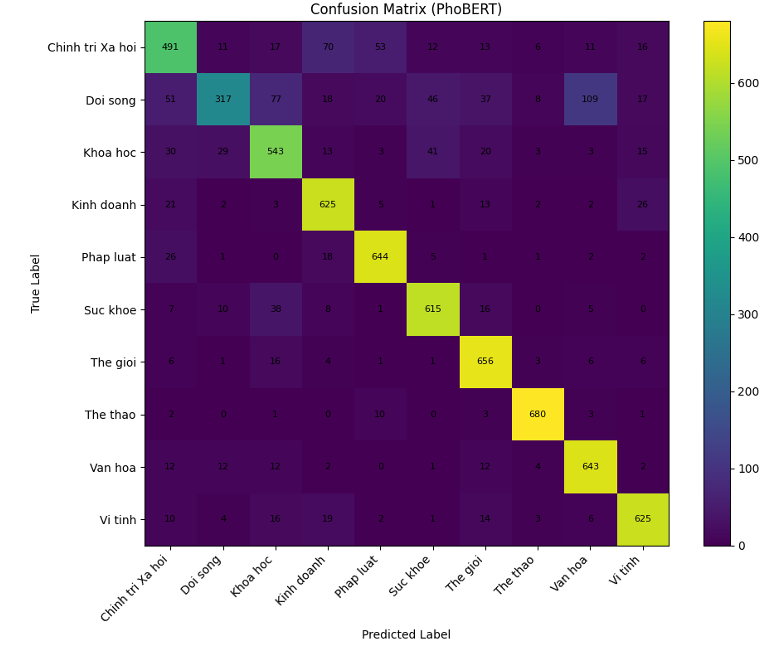

Confusion Matrices


LSTM (Trained from scratch)

PhoBERT (Fine-tuned)

🔬 06. Error Analysis

Common Confusions

The confusion matrices above show that both models struggle with multi-topic or ambiguous articles whose categories overlap semantically. The most frequent misclassifications are:

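- LSTM: Chính trị Xã hội (Politics & Society) ➔ Kinh doanh (Business) (222 errors)

- LSTM: Đời sống (Lifestyle) ➔ Khoa học (Science) / Văn hóa (Culture)

- PhoBERT: Đời sống (Lifestyle) ➔ Văn hóa (Culture) (109 errors)

- PhoBERT: Chính trị Xã hội (Politics & Society) ➔ Pháp luật (Law)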
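Rankings like the ones above can be read off a confusion matrix by sorting its off-diagonal cells. The sketch below assumes rows are true labels and columns are predicted labels. The 3x3 matrix is toy data invented for illustration, not the project's results, and `top_confusions` is a hypothetical helper.

```python
def top_confusions(matrix, labels, k=3):
    """Return the k largest off-diagonal cells as (count, true_label, predicted_label)."""
    pairs = [
        (matrix[i][j], labels[i], labels[j])
        for i in range(len(labels))
        for j in range(len(labels))
        if i != j and matrix[i][j] > 0
    ]
    pairs.sort(key=lambda p: p[0], reverse=True)  # most frequent errors first
    return pairs[:k]

# Toy confusion matrix (rows = true class, columns = predicted class)
labels = ["Chính trị Xã hội", "Kinh doanh", "Pháp luật"]
matrix = [
    [50, 9, 4],
    [3, 60, 1],
    [6, 2, 55],
]

for count, true_label, predicted in top_confusions(matrix, labels, k=2):
    print(f"{true_label} ➔ {predicted}: {count} errors")
```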